March 6, 2019 – By Mark Heymann, Chairman & CEO, UniFocus. Published in Global Business and Organizational Excellence.

For many in the service industry, the availability of big data is overwhelming. With volumes of information available at the push of a button, managers are awash in statistics but not always sure how useful they are. A shift from viewing data in terms of absolute numbers to understanding it in terms of cause and effect can help organizational leaders move from merely measuring performance to creating the conditions for optimal outcomes. Applicable to all industries, such an approach filters out extraneous information while taking into account, and correlating, the essential variables that have a significant impact on the bottom line, such as competition, market conditions, and customer touch points.

1 | INTRODUCTION

Long before big data was a big deal, the managers at an international hotel group based in the United States were focused on measuring housekeeping performance. For years, one of the metrics they had used to assess productivity was revenue per hour, a measure introduced to the organization by executives who had previously operated fast-food restaurants. At the time, competition in the hospitality industry was not as fierce as it is today, and room rates were more consistent. As the environment grew more competitive, however, rates began to fluctuate markedly, and the productivity measure the managers had come to rely on began to vary with changes in average rate. The organization tried to address this variability by adjusting the revenue-per-hour targets according to average rate; in other words, by flexing the goals.

The management team had a vested interest in maintaining this criterion. But as they began to analyze the metric, they discovered that revenue was less relevant to assessing housekeeping performance than the number of occupied rooms that needed to be cleaned. Rate strategy affected occupancy, but once a room was sold, it needed to be cleaned whatever the rate. After much discussion and verification of the data, the team changed the performance metric to hours per occupied room. This turned out to be a fairer measure and, as a result, management accountability improved.
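The sketch below illustrates why the switch mattered. It computes both metrics for two hypothetical weeks in which housekeeping cleans the same number of occupied rooms with the same hours, but the average rate differs. The `WeekResult` class and all figures are illustrative assumptions, not data from the hotel group.

```python
from dataclasses import dataclass


@dataclass
class WeekResult:
    """One week of housekeeping results (hypothetical figures)."""
    occupied_rooms: int        # rooms that had to be cleaned
    average_rate: float        # average room rate achieved, in dollars
    housekeeping_hours: float  # hours actually worked by housekeeping

    def revenue_per_hour(self) -> float:
        # Old metric: moves with the rate even when the workload does not.
        return self.occupied_rooms * self.average_rate / self.housekeeping_hours

    def hours_per_occupied_room(self) -> float:
        # New metric: reflects only the workload housekeeping controls.
        return self.housekeeping_hours / self.occupied_rooms


# Same workload and hours; only the rate strategy differs.
high_rate_week = WeekResult(occupied_rooms=1_200, average_rate=140.0, housekeeping_hours=600.0)
low_rate_week = WeekResult(occupied_rooms=1_200, average_rate=110.0, housekeeping_hours=600.0)

for label, week in [("high-rate week", high_rate_week), ("low-rate week", low_rate_week)]:
    print(f"{label}: revenue per hour {week.revenue_per_hour():.2f}, "
          f"hours per occupied room {week.hours_per_occupied_room():.2f}")
```

Revenue per hour falls from 280 to 220 even though housekeeping did identical work, while hours per occupied room stays at 0.50 in both weeks.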

In this particular instance, it was easy to convince the organization’s leaders to make the suggested change. Some changes involving performance assessment, however, are much more difficult to implement. Nonetheless, the lesson at the heart of this example, particularly now that managers have more data at their fingertips than ever before, is to regularly evaluate the information on which decisions are based and to ask whether better data are available to analyze in order to achieve business goals.

Managers today have access to a massive influx of data from a wide range of sources, which gets corralled into daily, weekly, and monthly reports in an attempt to organize and make sense of it all. But too many organizations get bogged down in the routine of generating reports and looking at the numbers without taking the time to understand what those numbers are telling them.

Too often, the problem compounds over time as new managers replace old ones and add their own data requests to what the company is already tracking. The wiser approach would be to step back and ask, “What do we want to achieve with the data? What are the data points that support the issues paramount to our business today? At what interval should the data be reviewed to obtain the best insight?” The goal should be to retain the metrics that truly matter and disregard the rest.

2 | THE DOWNSIDE OF ABSOLUTE NUMBERS

How does an organizational leader best interpret the data? The standard practice for many service industry businesses is to compare team performance projections with absolute numerical results. As the following examples illustrate, however, absolute numbers don’t always tell the whole story. All of them come from the hospitality industry, but they are relevant across the service sector.

- One hotel group rated the profitability of room operations at each of its properties from high to low. A hotel with a measurably higher average room rate than the others in the group consistently ranked first. When group leaders normalized the data for the rate difference, however, that hotel dropped out of the top five.

- A very large hotel with a high vacancy rate was operating at only a 65% rooms division profit. An organization seeking to rent 250 rooms for a 60-day period at no more than $35 per room per night requested a proposal, stipulating that the rooms be cleaned once a week rather than daily. Applying a traditional formula of annual rooms profit percentage based on total projected revenue, the hotel’s managers decided not to accept the business: their calculations showed that at only $35 per night, the hotel would make just $86 per room per week. That decision was overturned, however, after the managers considered the pure variable cost of an occupied room (only $8 for housekeeping and $10 to $11 overall), excluding fixed overhead. Based on this analysis, they realized that they would actually make about $233 per room per week, not the $86 they had originally calculated. Moreover, there was the potential for additional income from occupants’ food and beverage purchases on site. Once managers shifted their focus away from absolute numbers and percentage profitability, they were able to assess the true cost per room accurately and accept the business.

- A hotel that was required to bid out all work routinely included its in-house group in the bid process. For each maintenance project, the in-house crew’s bid was consistently higher, so management always awarded the job to an outside service. When the hotel needed painting work done, the in-house bid was once again higher. A closer look at the bid, however, revealed that the in-house group’s quote included its fixed overhead costs, plus the hourly labor of full-time employees who were available to do the painting because they had excess capacity during this period. As it turned out, the only variable costs were the paint and a few hours of additional labor here and there. Once management understood that, they never went outside again.

The lesson from these examples is clear: When managers look beyond absolute numbers or perceived total costs and percentages to obtain a complete understanding of the cost equation (variable vs. fixed costs), they make different decisions.
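The group-rooms decision in the second example comes down to contribution margin: revenue minus only the costs that the extra business actually adds. A minimal sketch follows, assuming that the $10 to $11 variable cost applies per room per week (one weekly cleaning) and taking the upper $11 figure; the constant names are illustrative. The $86 produced by the hotel’s traditional full-cost formula is not recomputed here, because the article does not give that formula’s inputs.

```python
NIGHTLY_RATE = 35.0            # maximum rate per room per night (from the example)
NIGHTS_PER_WEEK = 7
VARIABLE_COST_PER_WEEK = 11.0  # assumption: upper end of the $10-$11 variable cost, per room per week
ROOMS = 250                    # rooms requested by the group
STAY_NIGHTS = 60               # length of the stay


def weekly_revenue(rate: float = NIGHTLY_RATE) -> float:
    """Room revenue per room per week."""
    return rate * NIGHTS_PER_WEEK


def weekly_contribution(rate: float = NIGHTLY_RATE,
                        variable_cost: float = VARIABLE_COST_PER_WEEK) -> float:
    """Revenue minus only the costs the extra business adds.

    Fixed overhead is excluded because the hotel incurs it whether or not
    the rooms are sold.
    """
    return weekly_revenue(rate) - variable_cost


def stay_contribution(rooms: int = ROOMS, nights: int = STAY_NIGHTS) -> float:
    """Contribution over the whole stay, prorated from the weekly figure."""
    return rooms * (nights / NIGHTS_PER_WEEK) * weekly_contribution()


print(weekly_revenue())       # 245.0
print(weekly_contribution())  # 234.0
print(f"{stay_contribution():,.0f}")
```

Under these assumptions, weekly contribution comes to $234 per room, in line with the article’s “about $233,” and roughly half a million dollars over the 60-night stay before any food and beverage spending.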


3 | THE VALUE OF COMPARATIVE DATA

Service industry systems yield a great deal of valuable information and, in general, managers understand the value of looking at that data comparatively. Too often, however, they simply compare their firm’s performance to the average or to industry norms. When benchmarking performance against the average, organizations that demonstrate above-average performance are more likely to rest on their laurels. To ensure continuous improvement, a far more effective strategy would be to compare an organization’s results to those of the industry’s top performers. By benchmarking against the 80th percentile, organizations will discover more opportunities for growth. Making comparisons only to projections, such as budgetary ones, is also problematic, since results are subject to changes in market dynamics, volume, and rates.
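A minimal sketch of the difference between benchmarking against the average and against the 80th percentile, using Python’s `statistics` module. The peer results and the metric (a higher-is-better figure such as rooms cleaned per labor hour) are hypothetical.

```python
import statistics

# Hypothetical results for ten comparable hotels on a higher-is-better metric
# (for example, rooms cleaned per labor hour).
peer_results = [1.6, 1.7, 1.8, 1.8, 1.9, 2.0, 2.0, 2.1, 2.2, 2.4]
our_result = 2.0

average = statistics.mean(peer_results)
# quantiles(n=10) returns the nine decile cut points; index 7 is the 80th percentile.
top_benchmark = statistics.quantiles(peer_results, n=10, method="inclusive")[7]

print(f"gap to average:         {our_result - average:+.2f}")
print(f"gap to 80th percentile: {our_result - top_benchmark:+.2f}")
```

Here the property sits slightly above the peer average (about +0.05) but roughly 0.12 below the 80th-percentile benchmark, which is the growth opportunity an average-based comparison hides.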

3.1 | Comparing to potential

In manufacturing, daily production goals are based on needed volume and machine-hour capacity—a relatively simple calculation. By contrast, most service industry operations are subject to daily changes in business volumes, coupled with a service demand pattern that focuses on just-in-time delivery. For the hotel industry, there are a few exceptions—among them, laundry and housekeeping services. Laundry performance is assessed using a pounds-per-hour metric against machine capacity. Similarly, housekeeping can be measured by number of rooms serviced per day. In both areas, fluctuations in customer demand would have little impact on production, since the bulk of their processes—room linens and general room cleaning—are not tied to direct guest demand but actually can be performed in a nonfluctuating setting, much like a manufacturing operation.

Excluding these exceptions, most functions in a service-industry organization are driven by customer interaction, and the timing of these demands varies from day to day and within the day. These functions must therefore respond to changes in production needs from hour to hour. That makes comparing actual production to budget or to an industry average (at whatever percentile) far more challenging. Patterns of demand affect productivity, which makes it all the more important for individual operations to compare their results to the potential performance of their particular business unit. If managers do not know the check-in and check-out capacity of a particular operation and limit themselves to comparisons with industry averages, none of the occupied-room data available to them will help the front desk optimize performance. The more complex the operation, the more challenging it is to conduct performance comparisons. Therefore, metrics need to represent key drivers and results accurately.

Service industry managers can turn to their daily flash report for a good amount of important data. Those numbers, however, are useful only if they can be compared to a normalized figure reflecting competitive market potential, or to the organization’s own cost potential.

3.2 | New technology facilitates cost calculations

Comparisons to potential, which used to be quite difficult to make in the service industry, have become easier thanks to cost-management technology. As a result, service industry managers can hone their metrics and goals more finely. In a restaurant setting, technology now makes it possible to differentiate food cost by meal period and apply those individual potentials to determine the overall potential cost. For example, a 30% monthly food cost was once considered the mark of a high-performing restaurant, so the managers of a restaurant with a 29% food cost would rate its performance as better than average. But if 70% of that food was served at breakfast (the meal period with the lowest food cost) rather than at lunch or dinner, then the restaurant’s food cost should probably be closer to 24% or 25%, and a 29% result actually signals underperformance.
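The meal-period calculation can be sketched as a revenue-weighted average of per-period standards. The standard percentages below (22% for breakfast, 30% for lunch and dinner), the lunch/dinner split, and the reading of “70% of that food” as a share of food revenue are assumptions chosen for illustration, not figures from the article.

```python
# Assumed standard food-cost percentages by meal period (illustrative only).
STANDARD_FOOD_COST = {"breakfast": 0.22, "lunch": 0.30, "dinner": 0.30}


def potential_food_cost(revenue_mix: dict[str, float]) -> float:
    """Weight each meal period's standard by its share of food revenue."""
    if abs(sum(revenue_mix.values()) - 1.0) > 1e-9:
        raise ValueError("revenue shares must sum to 1")
    return sum(share * STANDARD_FOOD_COST[period] for period, share in revenue_mix.items())


mix = {"breakfast": 0.70, "lunch": 0.15, "dinner": 0.15}  # 70% breakfast, per the example
potential = potential_food_cost(mix)
actual = 0.29

print(f"potential {potential:.1%}, actual {actual:.1%}, gap {actual - potential:.1%}")
```

With these assumed standards, the blended potential is 24.4%, so a 29% result sits about 4.6 points above the restaurant’s own potential even though it beats the old 30% rule of thumb.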

In one instance, the managers of a hotel in the Los Angeles area were satisfied with its 29% food cost until they applied comparative technology to assess the share of total food revenue coming from banquets versus à la carte business. The analysis revealed that food costs rose when banquets accounted for a higher percentage of the total food business and fell when à la carte business prevailed. This ran counter to expectations, because banquet food costs are typically lower. In searching for the reason behind this result, management discovered that menu pricing had not been revised in 3 years. When the hotel brought its prices in line with the market, its food cost dropped by 3.5 percentage points, saving more than half a million dollars. This analysis would have been more difficult to make without the new technology.
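One way to run this kind of mix analysis, sketched here as an assumption rather than the hotel’s actual method, is to regress monthly blended food cost on the banquet share of food revenue. Because the blended cost is a revenue-weighted mix of the two segments, the intercept estimates the à la carte cost percentage and the intercept plus the slope estimates the banquet cost percentage. The monthly data are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


# Hypothetical months: banquet share of food revenue and blended food cost.
banquet_share = [0.30, 0.40, 0.50, 0.60, 0.45, 0.35]
food_cost = [0.2850, 0.2900, 0.2950, 0.3000, 0.2925, 0.2875]

intercept, slope = fit_line(banquet_share, food_cost)
a_la_carte_cost = intercept        # implied cost % with no banquet business
banquet_cost = intercept + slope   # implied cost % with only banquet business

print(f"implied a la carte cost {a_la_carte_cost:.1%}, implied banquet cost {banquet_cost:.1%}")
if banquet_cost > a_la_carte_cost:
    print("Banquet food cost runs above a la carte: review menu pricing.")
```

In this made-up data, the implied banquet cost (32%) exceeds the implied à la carte cost (27%), the same counterintuitive pattern that prompted the hotel to look for its cause.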

Labor cost potential has also become simpler to calculate across all positions with the aid of today’s technology. In housekeeping, this traditionally entailed a simple one-to-one calculation. If hotel management expects a housekeeper to service 15 rooms at 30 min per room in an eight-hour day (excluding breaks), it is easy to compare the standard time to clean all occupied rooms against all hours expended. In this case, the standard hours per room would be 0.533 (8 h ÷ 15 rooms), or 32 min per room on average: the 30 min of cleaning time plus the shift’s remaining half hour spread across the 15 rooms.
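The housekeeping standard can be expressed in a few lines. The sketch assumes that performance is reported as earned (standard) hours divided by actual hours; the day’s room count and hours are hypothetical.

```python
SHIFT_HOURS = 8.0     # hours per housekeeper per day, excluding breaks
ROOMS_PER_SHIFT = 15  # rooms a housekeeper is expected to service

standard_hours_per_room = SHIFT_HOURS / ROOMS_PER_SHIFT  # about 0.533 h, or 32 min


def housekeeping_performance(occupied_rooms: int, actual_hours: float) -> float:
    """Earned (standard) hours divided by actual hours; 1.0 means exactly on standard."""
    earned_hours = occupied_rooms * standard_hours_per_room
    return earned_hours / actual_hours


print(f"standard: {standard_hours_per_room:.3f} h ({standard_hours_per_room * 60:.0f} min) per room")
# Hypothetical day: 300 occupied rooms cleaned with 176 housekeeping hours.
print(f"performance: {housekeeping_performance(300, 176.0):.0%}")
```

For 300 occupied rooms the standard earns 160 h; if 176 h were actually used, housekeeping ran at about 91% of potential.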


Determining labor cost potential in food service, on the other hand, is far more complex. There can be one standard for serving and another for setting up. To complicate matters further, there is open capacity: if a server’s station is only half full, the server must still work it. Thanks to more advanced planning and scheduling technology, operators can now combine fixed and variable work into a joint standard based on volume served, and use it to determine how many hours should be used versus how many are being used. If the standard averages nine minutes per patron for a meal period, for example, managers can multiply it by the number of patrons served and compare the result with the hours actually worked to gauge performance against potential.
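A minimal sketch of a joint fixed-and-variable standard for one meal period. The 6 fixed hours (setup and minimum station coverage) and 7.5 variable minutes per cover are hypothetical values, chosen so that at 240 covers the blended standard works out to nine minutes per patron.

```python
def joint_standard_minutes(covers: int, fixed_hours: float,
                           variable_minutes_per_cover: float) -> float:
    """Spread fixed work (setup, open stations) over the covers served and add
    per-cover service time, giving one average standard in minutes per cover."""
    total_minutes = fixed_hours * 60.0 + variable_minutes_per_cover * covers
    return total_minutes / covers


def hours_should_use(covers: int, minutes_per_cover: float) -> float:
    return covers * minutes_per_cover / 60.0


# Hypothetical meal period.
covers = 240
standard = joint_standard_minutes(covers, fixed_hours=6.0, variable_minutes_per_cover=7.5)
should_use = hours_should_use(covers, standard)
actually_used = 40.0

print(f"standard {standard:.1f} min per cover; should use {should_use:.1f} h, "
      f"used {actually_used:.1f} h ({should_use / actually_used:.0%} of potential)")
```

Because the fixed hours are spread over more covers as volume rises, the blended minutes per cover fall in busier periods. At 240 covers the standard calls for 36 h, so a team that worked 40 h performed at 90% of potential.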

4 | TRACKING TRENDS

Trending can help organizational managers evaluate performance over time and is especially helpful when results can be compared to potential. Trends that show performance as volume varies can also be useful, especially in work areas that have both a fixed and a variable component. Managers can use those comparisons to determine whether particular areas of their business are on a positive, negative, or neutral trajectory.
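Classifying a trajectory as positive, negative, or neutral can be done with a least-squares slope and a small dead band. The `tolerance` value and the weekly series below are assumptions for illustration; the sketch expects a higher-is-better ratio such as potential hours divided by actual hours.

```python
def trend_direction(values: list[float], tolerance: float = 0.005) -> str:
    """Classify a series as "positive", "negative", or "neutral".

    Uses the least-squares slope per period; `tolerance` is an assumed dead
    band below which changes are treated as noise.
    """
    n = len(values)
    if n < 2:
        raise ValueError("need at least two periods")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(range(n), values))
    slope = sxy / sxx
    if slope > tolerance:
        return "positive"
    if slope < -tolerance:
        return "negative"
    return "neutral"


# Hypothetical weekly ratios of potential hours to actual hours (1.0 = at potential).
print(trend_direction([0.88, 0.90, 0.91, 0.93, 0.95]))  # positive
print(trend_direction([0.95, 0.95, 0.94, 0.95, 0.95]))  # neutral
print(trend_direction([0.95, 0.93, 0.91, 0.90, 0.88]))  # negative
```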

That said, if budgeting is done monthly and performance standards are the same for every day of the month, daily trending that compares performance to the budget parameter won’t be of much use. In fact, in such instances daily trending can be counterproductive. Here are a few scenarios for hospitality industry managers to consider:

- A hotel hosting a large social, military, educational, religious, or fraternal group might have a lower average daily rate (ADR) than called for in the budget, but revenue per available room (RevPAR) for the week might be on target. A knee-jerk response to the low ADR trend early in the week or month—for example, changing a short-term rate strategy and increasing rates—might well be counterproductive.

- A hotel might correctly interpret a 10% increase in ADR as a positive sign. But if the trend simply reflects a single strong week and the weeks that follow are not as strong, the hotel should avoid reading too much into a comparison of this short-term trend with the monthly budget average.

- Many restaurant managers use revenue per hour or labor as a percent of sales as a daily measure of performance. Relying on either measure is not very useful for daily or even weekly performance assessment. Lower average checks can make both numbers look poor, even though the number of customers who needed to be served has remained the same. In conducting a weekly analysis, a manager who cuts back on staff because labor as a percent of sales is running above budget through the first four days of the week may end up hurting service. This, in turn, might lead to a loss of revenue if the staff reduction results in long wait times that prompt customers to dine elsewhere.
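The first scenario follows from the identity RevPAR = ADR × occupancy. In the hypothetical week below, a large, lower-rated group pulls ADR well below budget, while higher occupancy keeps RevPAR on target. The budget and actual figures are illustrative assumptions.

```python
def revpar(adr: float, occupancy: float) -> float:
    """Revenue per available room: average daily rate times occupancy."""
    return adr * occupancy


# Hypothetical budget vs. a week with a large, lower-rated group in house.
budget = {"adr": 150.00, "occupancy": 0.70}
actual = {"adr": 131.25, "occupancy": 0.80}

adr_variance = actual["adr"] - budget["adr"]
revpar_variance = revpar(**actual) - revpar(**budget)

print(f"ADR variance:    {adr_variance:+.2f}")     # -18.75: alarming on its own
print(f"RevPAR variance: {revpar_variance:+.2f}")  # about zero: the week is on target
```

Reacting to the ADR line alone, for example by raising short-term rates, would risk the occupancy that is keeping the week on budget.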

Managers should set the frequency of tracking and trend analysis to match the intervals at which the comparative data points are most accurate. An ideal approach might be to review budget variances and trends monthly, and market trends or comparisons to the market weekly or monthly. Daily trend analysis, especially of operating costs, requires metrics that accurately reflect daily performance versus potential.

4.1 | Stick to actionable data

Although the incredible volume of data to be considered can be enough to overwhelm the most competent manager, new technology and user-friendly dashboards have simplified the task of quickly acting on crucial information and adapting to it. The key is to identify the data points that are most important to the business, corral them, and let the rest go. The next step is to determine which of those criteria need to be reviewed every day, which by week, and which by month.

Data that require daily review must be accessible at the touch of a button. Managers need to be able to interpret this information quickly and act on it in real time. This type of information should therefore be tactical: it should enable quick operational adjustments or confirm and reinforce successes. To keep data manageable, no more than five areas should be selected for daily tracking. Labor should be one of them.
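One way to make the daily, weekly, and monthly split explicit is to keep it as a small configuration and check it against the two rules above: at most five daily metrics, one of which is labor. The metric names, the “labor” naming convention, and the `validate_review_plan` helper are hypothetical.

```python
# Hypothetical review plan; the metric names are illustrative, not a prescribed list.
REVIEW_PLAN: dict[str, list[str]] = {
    "daily": [
        "labor: actual vs. potential hours",
        "occupied rooms",
        "covers served",
        "arrivals and departures",
        "guest complaints",
    ],
    "weekly": ["ADR and RevPAR vs. market", "overtime hours"],
    "monthly": ["budget variances", "food cost vs. potential", "employee engagement"],
}

MAX_DAILY_METRICS = 5  # ceiling suggested in the text


def validate_review_plan(plan: dict[str, list[str]]) -> None:
    """Raise ValueError if the daily list breaks the two rules in the text."""
    daily = plan.get("daily", [])
    if len(daily) > MAX_DAILY_METRICS:
        raise ValueError(f"{len(daily)} daily metrics; keep it to {MAX_DAILY_METRICS} or fewer")
    # Assumed naming convention: labor metrics start with "labor".
    if not any(metric.startswith("labor") for metric in daily):
        raise ValueError("labor should be one of the daily metrics")


validate_review_plan(REVIEW_PLAN)  # passes silently
```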

4.2 | Accurately assessing labor costs

Comparing actual labor to potential labor is a critical daily metric that helps managers understand how much labor they should be using in relation to actual volumes serviced. In this context, volume might refer to customers, meals produced, transactions, or other units, such as occupied rooms and pounds of processed laundry. It is advisable to avoid using revenue as a daily metric of cost performance, except in fast-food or high-volume beverage operations. In those operations, the average price per unit is consistent, which makes a revenue comparison essentially the same as a comparison by transaction or unit produced. Focusing on labor can help service industry organizations adjust their staffing to fulfill both their financial and qualitative performance potential without negatively affecting customer service or revenues.
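The sketch below shows why revenue-based labor metrics mislead on a daily basis. It uses two hypothetical days with the same covers and the same labor hours, where only the average check differs: labor as a percent of sales jumps on the second day, while labor hours per cover, a volume-based measure, is unchanged. The wage and volumes are assumptions.

```python
HOURLY_WAGE = 15.0  # assumed average wage, dollars per hour


def labor_pct_of_sales(labor_cost: float, sales: float) -> float:
    return labor_cost / sales


def labor_hours_per_cover(labor_hours: float, covers: int) -> float:
    return labor_hours / covers


# Two hypothetical days: identical covers and staffing, lower average check on day 2.
days = [
    {"covers": 300, "avg_check": 20.0, "labor_hours": 60.0},
    {"covers": 300, "avg_check": 16.0, "labor_hours": 60.0},
]

results = []
for day in days:
    sales = day["covers"] * day["avg_check"]
    labor_cost = day["labor_hours"] * HOURLY_WAGE
    results.append((labor_pct_of_sales(labor_cost, sales),
                    labor_hours_per_cover(day["labor_hours"], day["covers"])))

for i, (pct, per_cover) in enumerate(results, start=1):
    print(f"day {i}: labor {pct:.1%} of sales, {per_cover:.2f} labor hours per cover")
```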


A manufacturing business can produce the same volume every day; therefore, its production rate can be consistent every day. In a dynamic environment, however, volume changes daily. Take the example of a hotel that budgets labor for a 30-day month at $30,000, based on 2,000 h at $15 per hour. That suggests an average of about 67 labor hours per day. Broken down by cost, and treating the month as four weeks, the budget averages $7,500 per week, or about $1,071 per day. This figure, however, doesn’t accurately reflect reality. Friday and Saturday might be busy days at $1,500, or 100 labor hours, while Sunday, a light day, may entail only $450, or 30 h. And those 30 h don’t even cover the base level of staff needed to open the operation.
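The budget arithmetic above, and the gap between a flat daily allocation and actual daily needs, can be checked in a few lines. `MIN_OPEN_HOURS` is a hypothetical stand-in for the base staffing needed to open; the article does not give that figure.

```python
MONTHLY_BUDGET = 30_000.0  # dollars for a 30-day month (from the example)
HOURLY_WAGE = 15.0
DAYS_IN_MONTH = 30
MIN_OPEN_HOURS = 40.0      # hypothetical base hours needed just to open

budget_hours = MONTHLY_BUDGET / HOURLY_WAGE       # 2,000 h
avg_hours_per_day = budget_hours / DAYS_IN_MONTH  # about 66.7 h
weekly_budget = MONTHLY_BUDGET / 4                # $7,500, treating the month as four weeks
flat_daily_budget = weekly_budget / 7             # about $1,071
flat_daily_hours = flat_daily_budget / HOURLY_WAGE

# Volume-driven needs from the example, in dollars.
daily_needs = {"Friday": 1_500.0, "Saturday": 1_500.0, "Sunday": 450.0}

for day, dollars in daily_needs.items():
    hours = dollars / HOURLY_WAGE
    note = "  <- below the base needed to open" if hours < MIN_OPEN_HOURS else ""
    print(f"{day}: {hours:.0f} h needed vs {flat_daily_hours:.0f} h flat{note}")
```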

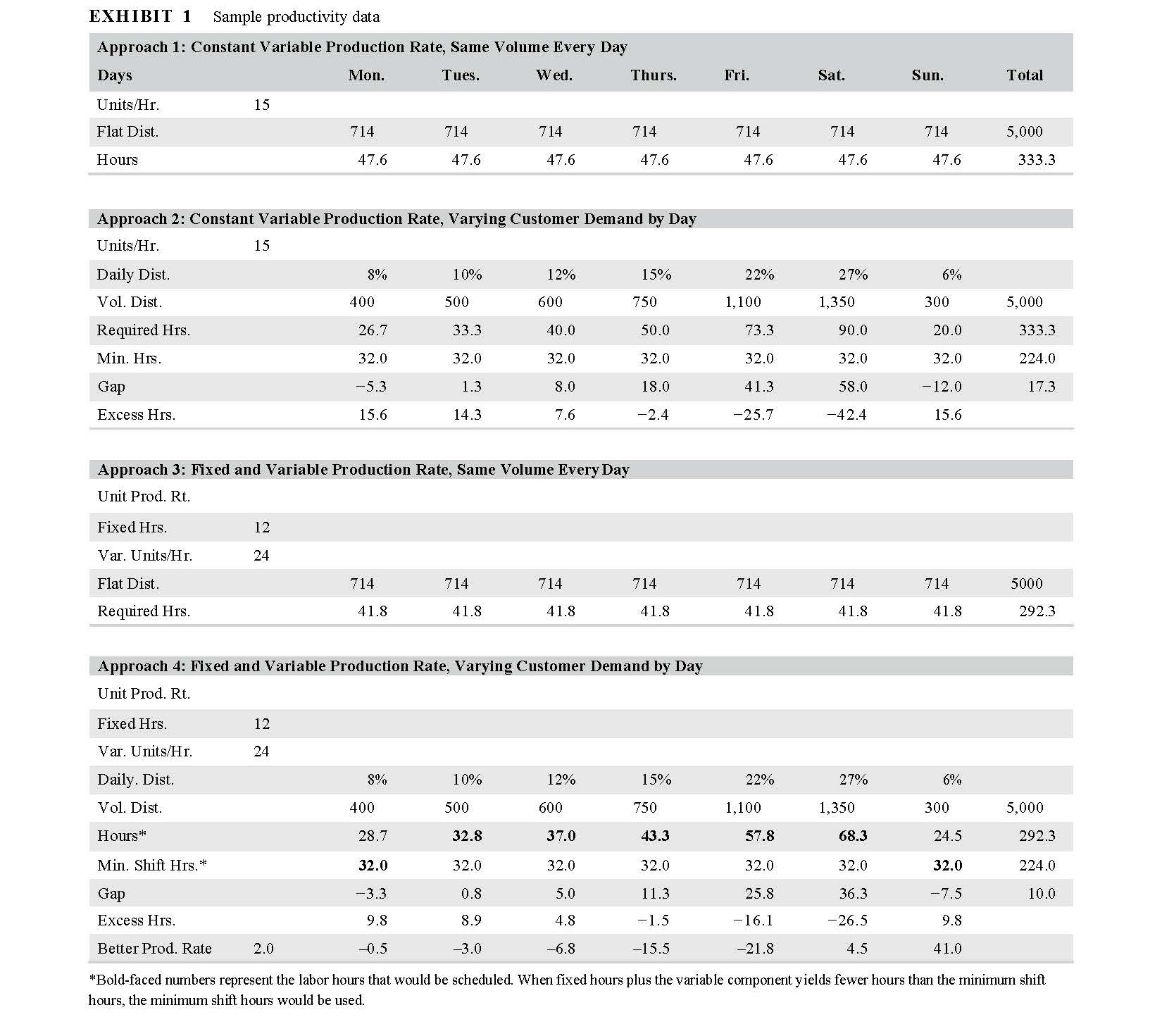

As Exhibit 1 illustrates, Approaches 1 and 2 show the differences between a weekly planning approach that does not account for varying daily volumes of customers (a “flat week”) and one that does, using a single production standard of 15 units per hour. Excess hours represent the gap between equal volume days (Approach 1, similar to manufacturing) and varying volume days (Approach 2, service environment). A positive number in the excess hours line means the flat week has more labor hours than necessary on a specific day, while a negative number in this same line reflects understaffing (short labor hours).

Approaches 3 and 4 in Exhibit 1 show the differences between a weekly planning approach that does not account for varying daily volumes of customers and one that does, both using a standard that has a fixed and a variable work component. This fixed-and-variable approach estimates work content more accurately as volume changes, especially on high-volume days, and most work in the service industry follows this type of model. Excess hours show the gap between equal volume days (Approach 3, similar to manufacturing) and the varying volume days of a service environment (Approach 4, hours in boldface type). Once again, a positive number means that the flat week has more labor hours than necessary on a specific day, while a negative number reflects understaffing.

The Gap line shows the difference in labor requirements based on the fixed and variable productivity rates and the minimum shift needs in the service environment depicted in Approaches 2 and 4.

The Better Production Rate line at the bottom of the chart compares the required hours calculated using Approach 2 (single variable production rate per unit) with Approach 4 (fixed base hours plus a variable production rate per unit).

The fixed and variable rate combination shown in Approach 4 yields 41 hours less for the sample week.
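The logic behind Exhibit 1 can be reproduced in a short script. The daily volumes, the 8 fixed base hours, and the variable rate of 20 units per hour are hypothetical, so the totals will not match the exhibit’s 41 h; only the single rate of 15 units per hour comes from the text. Minimum shift requirements are left out for brevity.

```python
DAYS = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]
volumes = [600, 550, 600, 700, 900, 1_100, 350]  # hypothetical units per day

SINGLE_RATE = 15.0    # units per hour (Approaches 1 and 2)
FIXED_HOURS = 8.0     # assumed fixed base hours per day (Approaches 3 and 4)
VARIABLE_RATE = 20.0  # assumed units per variable hour (Approaches 3 and 4)


def single_rate_hours(units: float) -> float:
    return units / SINGLE_RATE


def fixed_variable_hours(units: float) -> float:
    return FIXED_HOURS + units / VARIABLE_RATE


def units_per_hour(units: float) -> float:
    """Effective productivity under the fixed-plus-variable standard."""
    return units / fixed_variable_hours(units)


flat_volume = sum(volumes) / len(volumes)  # "flat week": same volume every day

approach1 = [single_rate_hours(flat_volume) for _ in DAYS]
approach2 = [single_rate_hours(v) for v in volumes]
approach3 = [fixed_variable_hours(flat_volume) for _ in DAYS]
approach4 = [fixed_variable_hours(v) for v in volumes]

# Positive: the flat plan has more hours than the day needs; negative: understaffed.
excess_single = [flat - need for flat, need in zip(approach1, approach2)]
excess_fixed_variable = [flat - need for flat, need in zip(approach3, approach4)]

for day, e_single, e_fv in zip(DAYS, excess_single, excess_fixed_variable):
    print(f"{day}: excess {e_single:+6.1f} h (single rate), {e_fv:+6.1f} h (fixed + variable)")

print(f"weekly hours: Approach 2 = {sum(approach2):.1f}, Approach 4 = {sum(approach4):.1f}")
print(f"units per hour at {min(volumes)} units: {units_per_hour(min(volumes)):.1f}; "
      f"at {max(volumes)} units: {units_per_hour(max(volumes)):.1f}")
```

In both pairs, excess hours sum to zero across the week, which is why a flat plan balances on paper while being over- or understaffed on individual days. With these parameters, Approach 4 needs fewer hours than Approach 2 on busy days but more on the light Sunday, where the fixed base dominates, and effective units per hour rise with volume.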

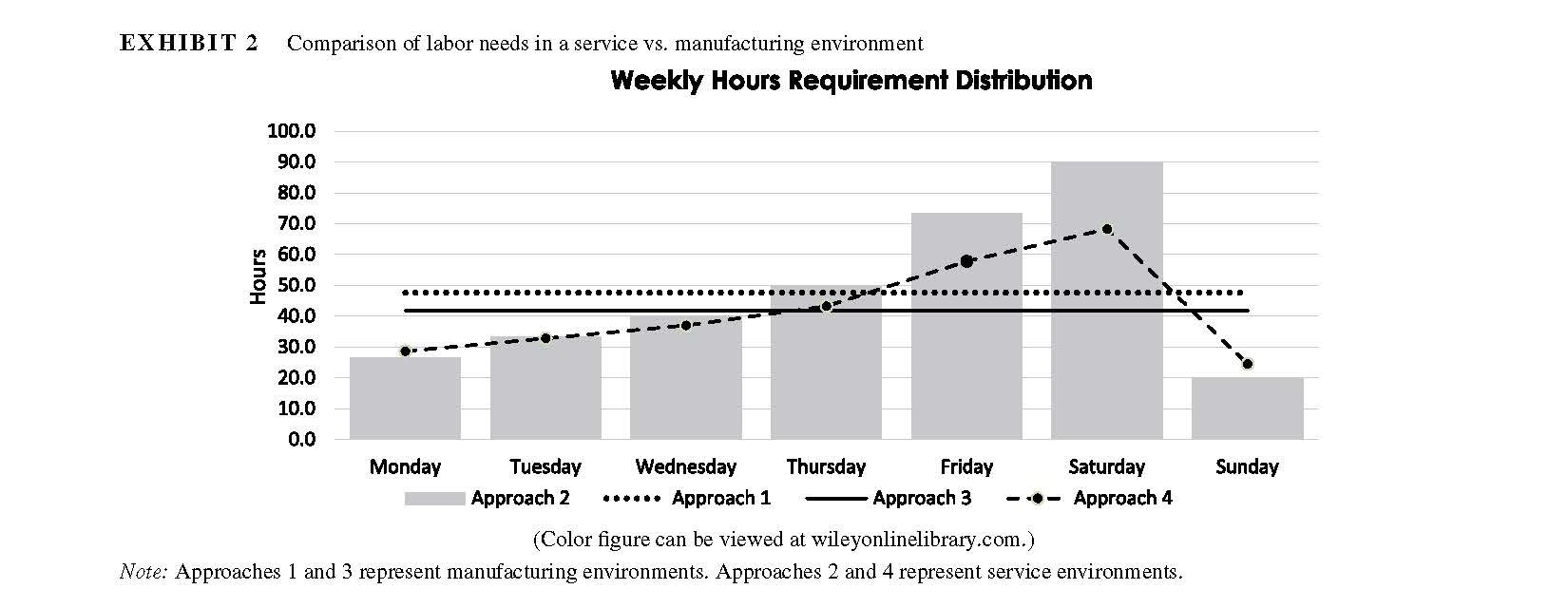

Exhibit 2 demonstrates the difference between an environment such as manufacturing, in which work is equally distributed throughout the week (Approaches 1 and 3), and an environment where demand differs by day of the week and, therefore, requires different staffing needs depending on the day (Approaches 2 and 4). In this example, labor demand peaks on Saturday and significantly falls off on Sunday—a scenario that is seen at some leisure-oriented properties in the hospitality industry.


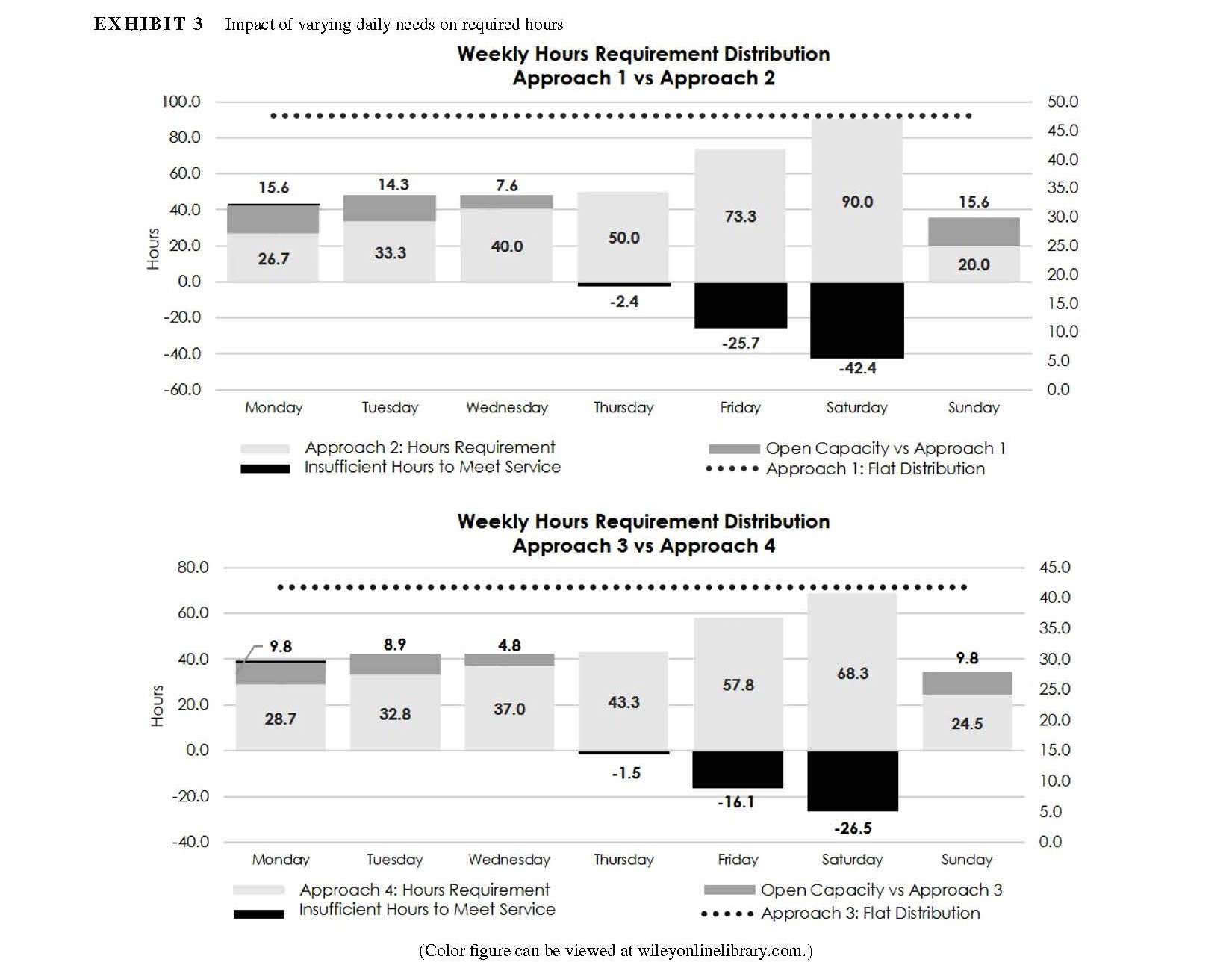

The two graphs in Exhibit 3 show the impact of varying daily needs on required hours (two different approaches to setting hour requirements). In cases where budgeted productivity is used to calculate weekly needs that are evenly spread over the days of the week (dotted line) in a service environment (changes in daily demand), some days have excess hours (open capacity) and others are understaffed (insufficient hours to meet service). This can have a negative impact on both costs and service quality.

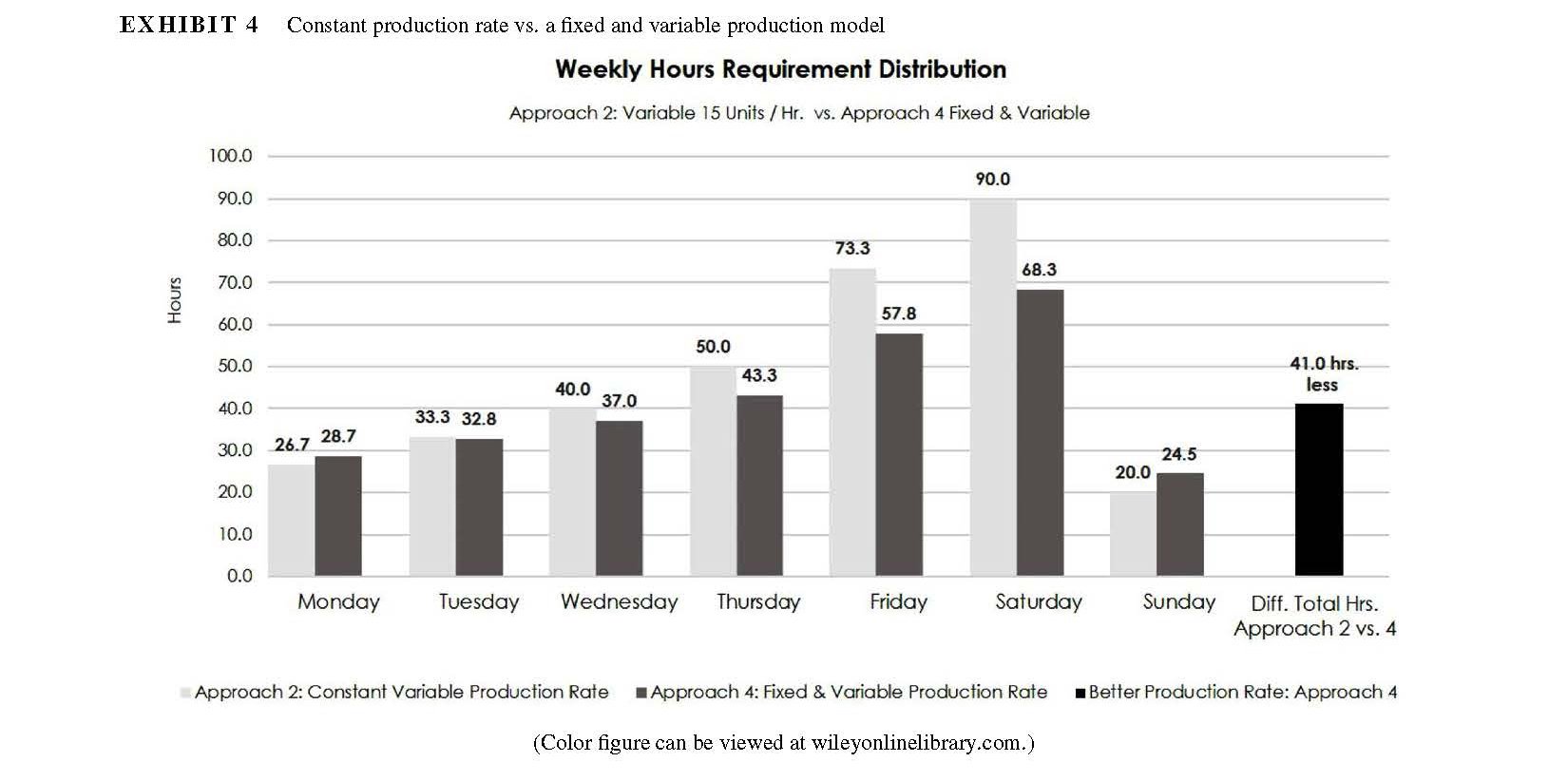

The illustration in Exhibit 4 compares the impact on required hours of a generalized production rate of 15 units per hour (Approach 2) with a more accurate depiction of labor requirements that has a fixed and a variable component (Approach 4). The graph compares Approach 2 hours at the same volume as Approach 4. In this example, the more accurate method (Approach 4) reduces labor needs by 40 h, or one full-time employee, over the week. The more accurate the formula, the better labor needs will match volumes, most often producing the lowest-cost result with consistent customer service. This approach also incorporates economies of scale, showing that productivity improves at higher volumes.

A fixed standard doesn’t account for economies of scale and will leave a service industry business with too few or too many labor hours on a particular day. An organization that makes daily decisions based on monthly performance averages will find that it is overstaffed about half the time and understaffed the other half. At the end of the period the numbers balance, but performance is not optimized, and that could hurt the organization’s future.

5 | DRIVING PERFORMANCE WITH CORRELATIVE DATA

In the 1970s and 1980s, the car manufacturing industry was characterized by a silo mentality. As a result, car models rolled off the assembly line with design flaws that would only later be discovered by their buyers. For instance, when a switch controlling the brake lights on a certain 1973 Jaguar malfunctioned, what should have been a simple fix became, instead, a major repair. Why? Because the only way for a mechanic to access and replace the switch was to remove the engine. No one on the design side had considered the impact of that switch placement on maintenance.

A similar mistake would be far less likely in today’s auto industry. Now, teams of engineers, service people, and designers work together to understand the impact of design decisions on consumers and on the total cost of ownership, which gives them the opportunity to get a design right the first time. And that is the idea behind correlative data, a key component of solid data analysis.

Correlative modeling, or predictable cause and effect analysis, changes the value of various areas of a service organization’s operations. It lets managers frame decisions based on what investments they want to make in their business to create a positive impact, rather than on which costs they need to absorb. For example, an organization that is unhappy with the results of customer surveys might immediately focus on improving its staff training. This decision, however, may well be based on one-dimensional analysis. What if the problem from a service perspective is understaffing? Or poor product perception? Or a disengaged staff? A correlative analysis of these factors in relation to customer perceptions would lead to better investment decisions that are more likely to generate the desired results.
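A correlative analysis of this kind can start as simply as ranking candidate drivers by the strength of their correlation with survey scores. The monthly figures below are hypothetical, and with so few observations a correlation is only a prompt for investigation, not proof of cause and effect.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mean_x) ** 2 for x in xs))
    sy = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (sx * sy)


# Hypothetical monthly data for one property.
guest_score = [78, 80, 74, 83, 76, 85]
drivers = {
    "training hours per employee": [4, 5, 5, 4, 6, 5],
    "staffing vs. potential (%)": [92, 95, 86, 99, 90, 101],
    "employee engagement score": [70, 72, 68, 75, 69, 78],
}

correlations = {name: pearson(series, guest_score) for name, series in drivers.items()}
ranked = sorted(correlations, key=lambda name: abs(correlations[name]), reverse=True)

for name in ranked:
    print(f"{name:<30} r = {correlations[name]:+.2f}")
```

In this invented data, staffing against potential tracks guest scores most closely, followed by engagement, while training hours show only a weak, negative relationship. More training alone would be the one-dimensional response the paragraph warns against.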

One large hospitality organization with both hotels and resorts is driving top-line results today using correlative data on employee engagement. The company developed a projection model to show the impact on revenue of each one-point improvement in engagement. Not only are its managers seeing opportunities to increase room revenue as a result, but they also understand the cost benefits of investing in training to improve employee engagement. And since it is often said that people do what you inspect, not what you expect, sharing engagement data with staff can be used as a motivational tool to drive performance further.
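The organization’s model is not described in detail, but the core idea of revenue impact per point of engagement can be sketched as the slope of a least-squares line across properties. The engagement scores, RevPAR values, and the 300-room hotel are hypothetical, and the slope shows association rather than proven cause.

```python
def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


# Hypothetical cross-property data.
engagement = [68, 70, 72, 74, 76, 78]             # engagement score per hotel
revpar = [92.0, 95.5, 97.0, 101.5, 103.0, 106.0]  # RevPAR per hotel, dollars

_, revpar_per_point = fit_line(engagement, revpar)
ROOMS = 300  # hypothetical hotel size
annual_revenue_per_point = revpar_per_point * ROOMS * 365

print(f"RevPAR per engagement point: ${revpar_per_point:.2f}")
print(f"annual room revenue per point for a {ROOMS}-room hotel: ${annual_revenue_per_point:,.0f}")
```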

Back in the 1980s, American auto manufacturers lagged far behind their Japanese counterparts in the number of hours it took to produce a car. To get to the leadership position they enjoy today, those U.S. firms had not only to reengineer their processes but also to learn to measure them and relate them directly to profitability. That same change in mindset needs to happen in the service industry. Management needs to de-silo information to fully understand how one data point drives others that affect the overall business.


Kaplan and Norton (1992) addressed this need with their description of a new model of operations and a learning organization that reconfigured the silo structure into a circle. Applying that same approach to big data, one would create a series of concentric circles. In the middle might be optimized profitability or customer satisfaction, for example. Moving outward, each subsequent circle would represent an independent variable that the organization wants to measure in order to reach the optimal point at the center. As the organization’s leaders assess their business, they take a bull’s-eye approach: Which variables furthest from the center will drive the organization to the desired middle point?

Moving a service-oriented business from Point A to Point B requires watching every touchpoint in the customer experience. All the while, managers need to look into the causes and effects that underlie the data they collect in order to truly understand how to improve their business and their brand. With the wealth of data points available today, a systematic process to ensure that the organization’s vision doesn’t become muddled with extraneous information is essential.

REFERENCES

Kaplan, R., & Norton, D. (1992). The balanced scorecard—Measures that drive performance. Harvard Business Review, January–February, 71–79.

AUTHOR BIOGRAPHY

Mark Heymann is a founding partner and the chairman and CEO of UniFocus in Dallas, TX. One of the largest performance management firms in the service sector, UniFocus provides comprehensive workforce management systems and financial tools, including applications and services for labor management, time and attendance, and integrated survey solutions. Heymann brings to his position more than 40 years of expertise in the industry, particularly in hospitality. He can be reached at mheymann@unifocus.com.
